Tarantool 2.11 (LTS)

Release date: May 24, 2023

Releases on GitHub: v. 2.11.7, v. 2.11.6, v. 2.11.5, v. 2.11.4, v. 2.11.3, v. 2.11.2, v. 2.11.1, v. 2.11.0

The 2.11 release of Tarantool includes many new features and fixes. This document provides an overview of the most important features for the Enterprise and Community editions.

2.11 is the long-term support (LTS) release with two years of maintenance. This means that you will receive all the necessary security fixes and bug fixes throughout this period, and be able to get technical support afterward. You can learn more about the Tarantool release policy from the corresponding document.

Tarantool provides the live upgrade mechanism that enables cluster upgrade without downtime. In case of upgrade issues, you can roll back to the original state without downtime as well.

To learn how to upgrade to Tarantool 2.11, see Upgrades.

Tarantool Enterprise Edition now supports encrypted SSL/TLS private key files protected with a password. Given that most certificate authorities generate encrypted keys, this feature simplifies the maintenance of Tarantool instances.

A password can be provided either with the new ssl_password URI parameter or in a text file specified with ssl_password_file, for example:

box.cfg{ listen = {
    uri = 'localhost:3301',
    params = {
        transport = 'ssl',
        ssl_key_file = '/path_to_key_file',
        ssl_cert_file = '/path_to_cert_file',
        ssl_ciphers = 'HIGH:!aNULL',
        ssl_password = 'topsecret'
    }
}}
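The example above embeds the password in the configuration. To keep it out of the code, the same listener can reference a password file through ssl_password_file instead. This is a sketch: all paths are placeholders, and it assumes the file contains the key password as plain text.

```lua
-- Same SSL listener as above, but the private key password is read from a
-- text file instead of being written in the configuration.
-- All paths are placeholders.
box.cfg{ listen = {
    uri = 'localhost:3301',
    params = {
        transport = 'ssl',
        ssl_key_file = '/path_to_key_file',
        ssl_cert_file = '/path_to_cert_file',
        ssl_ciphers = 'HIGH:!aNULL',
        ssl_password_file = '/path_to_password_file'
    }
}}
```

Since the password file holds a secret, its file system permissions should be restricted accordingly.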

To learn more, see Traffic encryption.

With 2.11, Tarantool Enterprise Edition includes new security enforcement options.

These options enable you to enforce the use of strong passwords, set up a maximum password age, and so on.

For example, the password_min_length configuration option specifies the minimum number of characters for a password:

box.cfg{ password_min_length = 10 }

To specify the maximum period of time (in days) a user can use the same password, you can use the password_lifetime_days option, which uses the system clock under the hood:

box.cfg{ password_lifetime_days = 365 }
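Because password_lifetime_days relies on the system clock, the check comes down to simple date arithmetic. The plain-Lua sketch below models it. password_expired() is a hypothetical helper, not Tarantool's implementation, and it assumes timestamps in Unix seconds.

```lua
-- Hypothetical model of a password lifetime check (not Tarantool's code).
-- changed_at and now are Unix timestamps in seconds (system clock).
local SECONDS_PER_DAY = 24 * 60 * 60

local function password_expired(changed_at, now, lifetime_days)
    if lifetime_days == nil or lifetime_days == 0 then
        return false -- in this sketch, an unset lifetime means "no limit"
    end
    return now - changed_at > lifetime_days * SECONDS_PER_DAY
end

print(password_expired(0, 365 * SECONDS_PER_DAY, 365))
```

Since the check depends on the system clock, moving the clock forward or backward on a server also shifts the moment a password is considered expired.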

Note that none of the new options are set by default. You can learn more about all the available options from the Authentication restrictions and Password policy sections.

By default, Tarantool uses the CHAP protocol to authenticate users and applies SHA-1 hashing to passwords.

In this case, password hashes are stored in the _user space unsalted.

If an attacker gains access to the database, they may crack a password, for example, using a rainbow table.

With the Enterprise Edition, you can enable PAP authentication with the SHA-256 hashing algorithm. For PAP, a password is salted with a user-unique salt before it is saved in the database.

Given that PAP transmits a password as plain text, Tarantool requires configuring SSL/TLS.

Then, you need to specify the box.cfg.auth_type option as follows:

box.cfg{ auth_type = 'pap-sha256' }

Learn more from the Authentication protocol section.
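Putting the two requirements together, a PAP setup combines an SSL listener with auth_type. In this sketch, the paths are placeholders and the user 'alice' is hypothetical. The password reset reflects an assumption that a stored hash is produced when a password is set, so existing users may need new passwords to get pap-sha256 hashes. Check the Authentication protocol section before relying on this.

```lua
-- PAP requires SSL/TLS on the listening socket (Enterprise Edition).
-- Paths are placeholders.
box.cfg{
    listen = {
        uri = 'localhost:3301',
        params = {
            transport = 'ssl',
            ssl_key_file = '/path_to_key_file',
            ssl_cert_file = '/path_to_cert_file'
        }
    },
    auth_type = 'pap-sha256'
}

-- Assumption: resetting the password of an existing (hypothetical) user
-- stores it with the newly configured pap-sha256 method.
box.schema.user.passwd('alice', 'new_password')
```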

Starting with 2.11, Tarantool Enterprise Edition provides the ability to create read views - in-memory snapshots of the entire database that aren’t affected by future data modifications. Read views can be used to make complex analytical queries. This reduces the load on the main database and improves RPS for a single Tarantool instance.

Working with read views consists of three main steps:

1. To create a read view, call the box.read_view.open() function:

tarantool> read_view1 = box.read_view.open({name = 'read_view1'})

2. After creating a read view, you can access database spaces and their indexes and get data using the familiar select and pairs data-retrieval operations, for example:

tarantool> read_view1.space.bands:select({}, {limit = 4})
---
- - [1, 'Roxette', 1986]
  - [2, 'Scorpions', 1965]
  - [3, 'Ace of Base', 1987]
  - [4, 'The Beatles', 1960]
...

3. When a read view is no longer needed, close it using the read_view_object:close() method:

tarantool> read_view1:close()

To learn more, see the Read views topic.
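The three steps above can be combined into one analytical function that always releases its read view. This is a sketch for the Enterprise Edition. It assumes the bands space from the example above, with the year in field 3, and assumes that space objects in a read view expose pairs() just as they expose select(). count_bands_before() is a hypothetical name.

```lua
-- Count bands formed before a given year using a read view, so the scan
-- does not see writes that happen while it runs.
local function count_bands_before(year)
    local rv = box.read_view.open({name = 'count_bands_rv'})
    local ok, result = pcall(function()
        local count = 0
        for _, tuple in rv.space.bands:pairs() do
            if tuple[3] < year then
                count = count + 1
            end
        end
        return count
    end)
    rv:close() -- release the read view even if the scan raised an error
    if not ok then
        error(result)
    end
    return result
end

print(count_bands_before(1970))
```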

Tarantool Enterprise Edition now includes the zlib algorithm for tuple compression.

This algorithm shows good performance in data decompression, which reduces CPU usage if the volume of read operations significantly exceeds the volume of write operations.

To use the new algorithm, set the compression option to zlib when formatting a space:

box.space.my_space:format{
    {name = 'id', type = 'unsigned'},
    {name = 'data', type = 'string', compression = 'zlib'},
}

The new compress module provides an API for compressing and decompressing arbitrary data strings using the same algorithms available for tuple compression:

compressor = require('compress.zlib').new()
data = compressor:compress('Hello world!') -- returns a binary string
compressor:decompress(data) -- returns 'Hello world!'
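To see the effect on repetitive data, the same compressor can be applied to a larger input and the sizes compared. This is a sketch for the Enterprise Edition that uses only the calls shown above. The printed sizes depend on the input and are not reproduced here.

```lua
-- Compress a highly repetitive string with zlib, compare sizes,
-- and check that decompression restores the original data.
local compressor = require('compress.zlib').new()

local original = string.rep('Hello world! ', 1000)
local compressed = compressor:compress(original)

print('original bytes:', #original, 'compressed bytes:', #compressed)
assert(compressor:decompress(compressed) == original)
```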

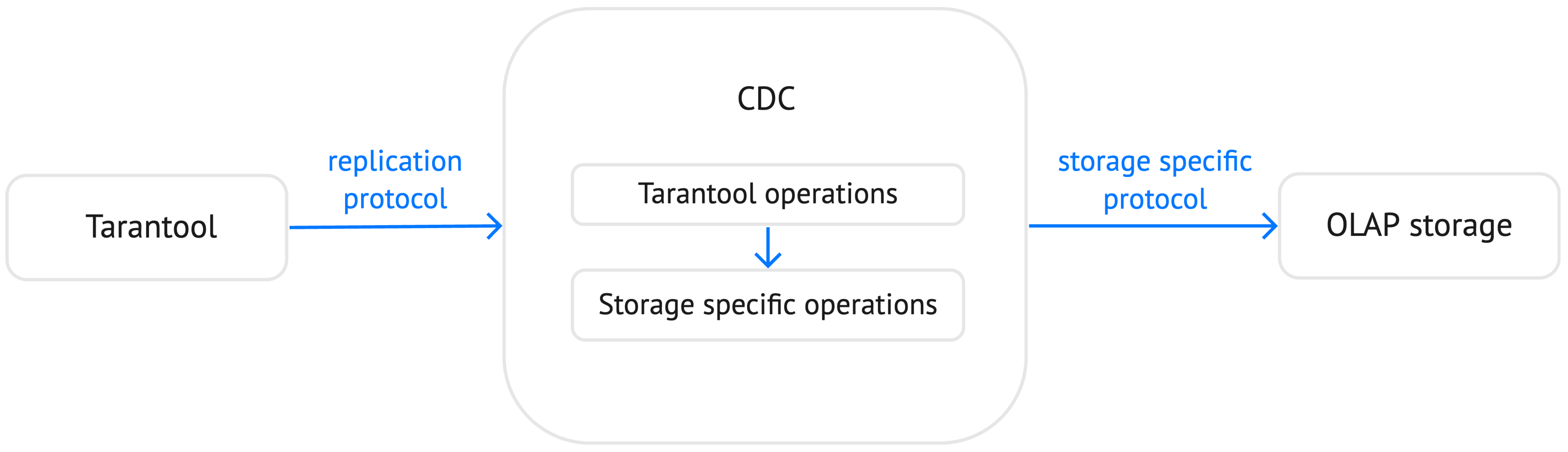

Tarantool can use a write-ahead log not only to maintain data persistence and replication but also for other tasks. For example, you can implement a CDC (Change Data Capture) utility that transforms a data replication stream and replicates data from Tarantool to an external storage.

With 2.11, Tarantool Enterprise Edition provides WAL extensions that add auxiliary information to each write-ahead log record.

For example, you can enable storing old and new tuples for each write-ahead log record.

This is especially useful for the update operation because a regular write-ahead log record for an update contains only the key value rather than the whole tuple.

See the WAL extensions topic to learn how to enable and configure WAL extensions.
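As a starting point, enabling both old and new tuples for all spaces might look like the following sketch. It assumes the wal_ext configuration option with boolean old and new fields, as described in the WAL extensions topic. Verify the exact option layout there, including any per-space settings.

```lua
-- Enterprise Edition: store the old and the new tuple in every
-- write-ahead log record (assumed wal_ext layout, see WAL extensions).
box.cfg{
    wal_ext = {
        old = true,
        new = true
    }
}
```

Storing both tuples makes every record self-contained for a CDC consumer at the cost of larger WAL files.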

Starting with 2.11, Tarantool supports pagination, which lets you get data in chunks.

The index_object:select() and index_object:pairs() methods now provide the after option that specifies a tuple or a tuple’s position after which select starts the search.

In the example below, the select operation gets at most 3 tuples after the specified tuple:

tarantool> bands.index.primary:select({}, {after = {4, 'The Beatles', 1960}, limit = 3})
---
- - [5, 'Pink Floyd', 1965]
  - [6, 'The Rolling Stones', 1962]
  - [7, 'The Doors', 1965]
...

The after option also accepts a tuple position represented as a base64 string. To get such a position, set the fetch_pos boolean option to true. In this case, select returns the position of the last selected tuple as the second value:

tarantool> result, position = bands.index.primary:select({}, {limit = 3, fetch_pos = true})
---
...

Then, pass this position as the after parameter:

tarantool> bands.index.primary:select({}, {limit = 3, after = position})
---
- - [4, 'The Beatles', 1960]
  - [5, 'Pink Floyd', 1965]
  - [6, 'The Rolling Stones', 1962]
...
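Repeating these two calls in a loop walks an entire index page by page. In this sketch, for_each_page() is a hypothetical helper, and bands is the space object used in the examples above. Passing after = nil on the first iteration simply omits the option, so the first page starts at the beginning of the index.

```lua
-- Walk an index in pages of page_size tuples using fetch_pos and after.
local function for_each_page(index, page_size, callback)
    local position = nil
    while true do
        local tuples
        tuples, position = index:select({}, {
            limit = page_size,
            after = position, -- nil on the first call: start from the beginning
            fetch_pos = true,
        })
        if #tuples == 0 then
            break
        end
        callback(tuples)
        if #tuples < page_size then
            break -- a short page means there is nothing left to read
        end
    end
end

for_each_page(bands.index.primary, 3, function(page)
    for _, tuple in ipairs(page) do
        print(tuple[1], tuple[2], tuple[3])
    end
end)
```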

The new after and fetch_pos options are also implemented by the built-in net.box connector.

For example, you can use these options to get data asynchronously.
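Over net.box, the same options can be passed to a remote index. This sketch assumes a remote instance at localhost:3301 that has the bands space with a primary index, and a guest or user allowed to read it. It also assumes that a synchronous remote select with fetch_pos returns the position as a second value, mirroring the local API. The asynchronous call uses the standard is_async option and waits on the returned future.

```lua
local net_box = require('net.box')
local conn = net_box.connect('localhost:3301')
local primary = conn.space.bands.index.primary

-- First page and its position (assumed to mirror the local select).
local first_page, position = primary:select({}, {limit = 3, fetch_pos = true})

-- Next page requested asynchronously: select returns a future immediately.
local future = primary:select({}, {limit = 3, after = position, is_async = true})
-- ... other work can happen here ...
local second_page = future:wait_result(1) -- wait up to 1 second

conn:close()
```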

The 2.11 version provides the ability to downgrade a database to the specified Tarantool version using the box.schema.downgrade() method. This might be useful in the case of a failed upgrade.

To prepare a database for using it on an older Tarantool instance, call box.schema.downgrade and pass the desired Tarantool version:

tarantool> box.schema.downgrade('2.8.4')

To see Tarantool versions available for downgrade, call box.schema.downgrade_versions().

The earliest release available for downgrade is 2.8.2.
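A cautious downgrade can first confirm that the target version is listed and then persist the result. This is a sketch: '2.8.4' is the example target from above, and the final box.snapshot() call reflects an assumption that the downgraded schema should be saved in a fresh snapshot before the older Tarantool binary is started. Follow the Upgrades guide for the authoritative procedure.

```lua
local target = '2.8.4'

-- Check that the target version is available for downgrade.
local available = false
for _, version in ipairs(box.schema.downgrade_versions()) do
    if version == target then
        available = true
        break
    end
end

if not available then
    error('downgrade to ' .. target .. ' is not available')
end

box.schema.downgrade(target)
box.snapshot() -- assumption: save the downgraded schema before restarting
```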

In previous Tarantool versions, the replication_connect_quorum option was used to specify the number of running nodes to start a replica set. This option was designed to simplify a replica set bootstrap. But in fact, this behavior brought some issues during a cluster lifetime and maintenance operations, for example:

- Users who didn’t change this option encountered problems with the partial cluster bootstrap.

- Users who changed the option encountered problems during the instance restart.

With 2.11, replication_connect_quorum is deprecated in favor of bootstrap_strategy.

This option works during a replica set bootstrap and implies sensible default values for other parameters based on the replica set configuration.

Currently, bootstrap_strategy accepts two values:

- auto: a node doesn’t boot if half or more of the other nodes in a replica set are not connected. For example, if the replication parameter contains 2 or 3 nodes, a node requires 2 connected instances. In the case of 4 or 5 nodes, at least 3 connected instances are required. Moreover, a bootstrap leader fails to boot unless every connected node has chosen it as a bootstrap leader.

- legacy: a node requires the replication_connect_quorum number of other nodes to be connected. This option is added to keep compatibility with the current versions of Cartridge and might be removed in the future.
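The numbers in the auto example follow a strict-majority rule. The plain-Lua sketch below reproduces them. auto_bootstrap_quorum() is a hypothetical helper that matches the examples above, not Tarantool's code. For the exact rule, see the bootstrap_strategy reference.

```lua
-- Hypothetical helper: the number of connected instances required by the
-- 'auto' strategy when the replication parameter lists n nodes.
local function auto_bootstrap_quorum(n)
    return math.floor(n / 2) + 1
end

for n = 2, 5 do
    print(n, auto_bootstrap_quorum(n))
end
```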

Starting with 2.11, if a fiber works too long without yielding control, you can use a fiber slice to limit its execution time.

The fiber_slice_default compat option controls the default value of the maximum fiber slice.

In future versions, the new behavior controlled by this option is planned to become the default.
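To try the future default now, the option can be switched through the compat module. This sketch assumes the usual compat values, 'old' for the current behavior and 'new' for the upcoming one.

```lua
-- Opt in to the new default maximum fiber slice ahead of time.
local compat = require('compat')
compat.fiber_slice_default = 'new'
```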

There are two slice types - a warning and an error slice:

- When a warning slice is over, a warning message is logged, for example:

fiber has not yielded for more than 0.500 seconds

- When an error slice is over, the fiber is cancelled and the FiberSliceIsExceeded error is thrown:

FiberSliceIsExceeded: fiber slice is exceeded

Note that these messages can point to issues in existing application code that might cause problems in production.

The fiber slice is checked by all functions operating on spaces and indexes, such as index_object:select(), space_object:replace(), and so on.

You can also use the fiber.check_slice() function in application code to check whether the slice for the current fiber is over.

The example below shows how to use fiber.set_max_slice() to limit the slice for all fibers.

fiber.check_slice() is called inside a long-running operation to determine whether a slice for the current fiber is over.

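-- app.lua --
local fiber = require('fiber')
local clock = require('clock')

-- Warn after 1.5 seconds without yielding, raise an error after 3 seconds.
fiber.set_max_slice({warn = 1.5, err = 3})

local time = clock.monotonic()

local function long_operation()
    while clock.monotonic() - time < 5 do
        -- Raises FiberSliceIsExceeded once the error slice is over.
        fiber.check_slice()
        -- Long-running operation ⌛⌛⌛ --
    end
end

local long_operation_fiber = fiber.create(long_operation)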

The output should look as follows:

$ tarantool app.lua
fiber has not yielded for more than 1.500 seconds
FiberSliceIsExceeded: fiber slice is exceeded

To learn more about fiber slices, see the Limit execution time section.
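Since a slice measures time spent without yielding, a long loop can stay within the limit by passing control periodically. In this sketch, process_item() is a hypothetical placeholder for real work and the batch size is arbitrary. fiber.yield() gives other fibers a chance to run, and the slice of this fiber is measured anew afterwards.

```lua
local fiber = require('fiber')

-- Hypothetical unit of work.
local function process_item(i)
    return i * i
end

-- Yield every `batch` iterations so the fiber never runs for a full slice
-- without passing control.
local function long_operation_cooperative(n, batch)
    local sum = 0
    for i = 1, n do
        sum = sum + process_item(i)
        if i % batch == 0 then
            fiber.yield()
        end
    end
    return sum
end

fiber.set_max_slice({warn = 1.5, err = 3})

local f = fiber.new(long_operation_cooperative, 1000000, 10000)
f:set_joinable(true)
local ok, sum = f:join() -- true and the result if the fiber finished normally
print(ok, sum)
```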

Tarantool 2.11 adds support for modules in the logging subsystem.

You can configure different log levels for each module using the box.cfg.log_modules configuration option.

The example below shows how to set the info level for module1 and the error level for module2:

tarantool> box.cfg{log_level = 'warn', log_modules = {module1 = 'info', module2 = 'error'}}
---
...

To create a log module, call the require('log').new() function:

tarantool> module1_log = require('log').new('module1')
---
...
tarantool> module2_log = require('log').new('module2')
---
...

Given that module1_log has the info logging level, calling module1_log.info shows a message but module1_log.debug is swallowed:

tarantool> module1_log.info('Hello from module1!')
2023-05-12 15:10:13.691 [39202] main/103/interactive/module1 I> Hello from module1!
---
...
tarantool> module1_log.debug('Hello from module1!')
---
...

Similarly, module2_log swallows all events with severities below the error level:

tarantool> module2_log.info('Hello from module2!')
---
...
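The filtering rule in these examples can be modeled in a few lines of plain Lua. This is a hypothetical sketch, not Tarantool's code. It covers only the levels used here and assumes that modules without their own entry fall back to the global log_level.

```lua
-- Lower number = more severe. Only the levels used in this section.
local SEVERITY = { error = 1, warn = 2, info = 3, verbose = 4, debug = 5 }

-- A message is written if it is at least as severe as the level configured
-- for its module (or the global log_level when the module has no entry).
local function is_logged(cfg, module_name, message_level)
    local module_level = cfg.log_modules[module_name] or cfg.log_level
    return SEVERITY[message_level] <= SEVERITY[module_level]
end

local cfg = {
    log_level = 'warn',
    log_modules = { module1 = 'info', module2 = 'error' },
}

print(is_logged(cfg, 'module1', 'info'))  -- shown
print(is_logged(cfg, 'module1', 'debug')) -- swallowed
```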

When sending a request, the HTTP client now automatically serializes the request body according to the specified Content-Type header. Serialization supports all the Tarantool built-in types.

By default, the client uses the application/json content type and sends data serialized as JSON:

local http_client = require('http.client').new()
local uuid = require('uuid')
local datetime = require('datetime')

response = http_client:post('https://httpbin.org/anything', {
    user_uuid = uuid.new(),
    user_name = "John Smith",
    created_at = datetime.now()
})

The body for the request above might look like this:

{
    "user_uuid": "70ebc08d-2a9a-4ea7-baac-e9967dd45ac7",
    "user_name": "John Smith",
    "created_at": "2023-05-15T11:18:46.160910+0300"
}

To send data in a YAML or MsgPack format, set the Content-Type header explicitly to application/yaml or application/msgpack, for example:

response = http_client:post('https://httpbin.org/anything', {
    user_uuid = uuid.new(),
    user_name = "John Smith",
    created_at = datetime.now()
}, {
    headers = {
        ['Content-Type'] = 'application/yaml',
    }
})

You can now encode query and form parameters using the new params request option.

In the example below, the requested URL is https://httpbin.org/get?page=1.

local http_client = require('http.client').new()

response = http_client:get('https://httpbin.org/get', {
    params = { page = 1 },
})
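Encoding parameters means turning a Lua table into a key=value string joined with &. The plain-Lua sketch below illustrates the idea. encode_query() and percent_encode() are hypothetical helpers, not Tarantool's implementation. They sort keys for a stable result and percent-encode every byte outside the RFC 3986 unreserved set.

```lua
-- Percent-encode everything except letters, digits, and - . _ ~
local function percent_encode(value)
    return (tostring(value):gsub('[^%w%-%._~]', function(c)
        return string.format('%%%02X', string.byte(c))
    end))
end

-- Build "k1=v1&k2=v2" with keys in sorted order.
local function encode_query(params)
    local keys = {}
    for k in pairs(params) do
        table.insert(keys, k)
    end
    table.sort(keys)
    local parts = {}
    for _, k in ipairs(keys) do
        table.insert(parts, percent_encode(k) .. '=' .. percent_encode(params[k]))
    end
    return table.concat(parts, '&')
end

print(encode_query({ page = 1 }))
```

The exact escaping Tarantool applies may differ. For example, form-encoded bodies conventionally encode spaces as + rather than %20.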

Similarly, you can send form parameters using the application/x-www-form-urlencoded type as follows:

local http_client = require('http.client').new()

response = http_client:post('https://httpbin.org/anything', nil, {
    params = { user_id = 1, user_name = 'John Smith' },
})

The HTTP client now supports chunked writing and reading of request and response data, respectively. The example below shows how to get chunks of a JSON response sequentially instead of waiting for the entire response:

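local http_client = require('http.client').new()
local json = require('json')

-- Hypothetical endpoint that streams CRLF-delimited JSON documents.
local url = 'https://example.com/stream'
local timeout = 1

-- get() takes the URL and an options table (there is no body argument).
local io = http_client:get(url, {chunked = true})
for _ = 1, 3 do
    local data = io:read('\r\n', timeout)
    if data == nil or #data == 0 then
        -- End of the response.
        break
    end
    local decoded = json.decode(data)
    -- <..process decoded data..>
end
io:finish(timeout)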

Streaming can also be useful to upload a large file to a server or to subscribe to changes in etcd using the gRPC-JSON gateway.

The example below demonstrates communication with the etcd stream interface.

The request data is written line-by-line, and each line represents an etcd command.

local http_client = require('http.client').new()

local io = http_client:post('http://localhost:2379/v3/watch', nil, {chunked = true})
io:write('{"create_request":{"key":"Zm9v"}}')
local res = io:read('\n')
print(res)
-- <..you can feed more commands here..>
io:finish()
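For the large-file upload case mentioned above, chunked writing keeps memory usage bounded. In this sketch, the upload URL and the file path are hypothetical placeholders, and the 64 KiB chunk size is arbitrary. The stream variable is named stream so that it does not shadow Lua's io library, which is used here to read the file. Note that standard Lua file reads block the Tarantool event loop while they run.

```lua
local http_client = require('http.client').new()

-- Placeholder path and URL.
local file = assert(io.open('/path/to/large_file.bin', 'rb'))
local stream = http_client:post('https://example.com/upload', nil, {chunked = true})

-- Send the file in 64 KiB chunks instead of loading it into memory at once.
while true do
    local chunk = file:read(64 * 1024)
    if chunk == nil then
        break -- end of file
    end
    stream:write(chunk)
end

file:close()
stream:finish()
```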

Linearizability of read operations implies that if a response for a write request arrived earlier than a read request was made, this read request should return the results of the write request.

Tarantool 2.11 introduces the new linearizable isolation level for box.begin():

box.begin({txn_isolation = 'linearizable', timeout = 10})
box.space.my_space:select({1})
box.commit()

When called with linearizable, box.begin() yields until the instance receives enough data from remote peers to be sure that the transaction is linearizable.
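Because box.begin() may wait for remote peers and fail, application code typically guards the whole transaction. In this sketch, linearizable_get() is a hypothetical helper, and my_space with key 1 are example values. Errors from box.begin() and from the read are returned instead of raised, and a failed read rolls the transaction back.

```lua
local function linearizable_get(space_name, key)
    -- box.begin() may yield and fail while waiting for remote peers.
    local ok, err = pcall(box.begin, {txn_isolation = 'linearizable', timeout = 10})
    if not ok then
        return nil, err
    end
    local read_ok, result = pcall(function()
        return box.space[space_name]:get(key)
    end)
    if not read_ok then
        box.rollback()
        return nil, result
    end
    box.commit()
    return result
end

local tuple, err = linearizable_get('my_space', 1)
```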

There are several prerequisites for linearizable transactions:

- Linearizable transactions may only perform requests to synchronous, local, or temporary memtx spaces.

- Starting a linearizable transaction requires box.cfg.memtx_use_mvcc_engine to be set to true.

- The node must be the replication source for at least N - Q + 1 remote replicas. Here, N is the count of registered nodes in the cluster and Q is replication_synchro_quorum. So, for example, you can't perform a linearizable transaction on anonymous replicas.
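The last prerequisite is simple arithmetic. The plain-Lua sketch below computes it for a few cluster sizes. required_downstream_replicas() is a hypothetical helper name.

```lua
-- Minimum number of remote replicas this node must feed (N - Q + 1),
-- where n is the number of registered nodes and q is
-- replication_synchro_quorum.
local function required_downstream_replicas(n, q)
    return n - q + 1
end

print(required_downstream_replicas(5, 3)) -- a 5-node cluster with quorum 3
```

For example, in a 3-node cluster with a quorum of 2, the node has to replicate to both other nodes.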

Tarantool is primarily designed for OLTP workloads. This means that data reads are supposed to be relatively small. However, a suboptimal SQL query can cause a heavy load on a database.

The new sql_seq_scan session setting lets you explicitly forbid full table scans.

The request below should fail with the Scanning is not allowed for 'T' error:

SET SESSION "sql_seq_scan" = false;
SELECT a FROM t WHERE a + 1 > 10;

To enable table scanning explicitly, use the new SEQSCAN keyword:

SET SESSION "sql_seq_scan" = false;
SELECT a FROM SEQSCAN t WHERE a + 1 > 10;
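The same statements can be run from Lua through box.execute(), which reports SQL errors by returning nil and an error object instead of raising. This sketch assumes a table T with a column A, as in the SQL above.

```lua
box.execute([[SET SESSION "sql_seq_scan" = false;]])

-- Full scan without SEQSCAN: expected to fail, as described above.
local res, err = box.execute([[SELECT a FROM t WHERE a + 1 > 10;]])
if res == nil then
    print(err)
end

-- The explicit SEQSCAN keyword allows the full scan.
res, err = box.execute([[SELECT a FROM SEQSCAN t WHERE a + 1 > 10;]])
```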

In future versions, SEQSCAN will be required for scanning queries with the ability to disable the check using the sql_seq_scan session setting.

The new behavior can be enabled using a corresponding compat option.
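Switching to the future behavior might look like the following sketch. It assumes the compat option is named sql_seq_scan_default and accepts the usual 'new' value.

```lua
-- Opt in to the future SQL behavior: scanning queries require SEQSCAN.
require('compat').sql_seq_scan_default = 'new'
```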

Leader election is implemented in Tarantool as a modification of the Raft algorithm. The 2.11 release adds the ability to specify the leader fencing mode that affects the leader election process.

Note

Currently, Cartridge does not support leader election using Raft.

You can control the fencing mode using the election_fencing_mode property, which accepts the following values:

- In soft mode, a connection is considered dead if there are no responses for 4 * replication_timeout seconds both on the current leader and the followers.

- In strict mode, a connection is considered dead if there are no responses for 2 * replication_timeout seconds on the current leader and 4 * replication_timeout seconds on the followers. This improves the chances that there is only one leader at any time.
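The timeouts above scale with replication_timeout. The plain-Lua sketch below reproduces them. fencing_timeouts() is a hypothetical helper that returns the leader-side and follower-side timeouts in seconds and handles only the two modes described here.

```lua
-- Returns (leader_timeout, follower_timeout) in seconds.
local function fencing_timeouts(mode, replication_timeout)
    if mode == 'soft' then
        return 4 * replication_timeout, 4 * replication_timeout
    elseif mode == 'strict' then
        return 2 * replication_timeout, 4 * replication_timeout
    end
    error('mode not covered by this sketch: ' .. tostring(mode))
end

print(fencing_timeouts('strict', 1))
```

In strict mode, the leader gives up its role earlier than followers declare it dead, which is what reduces the chance of two leaders coexisting. The mode itself is set with box.cfg, for example box.cfg{election_fencing_mode = 'strict'}.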